Author

Incresco Team

Incresco Editorial

Subject Matter Expert

Content reviewed by Incresco's AI & product specialists.

Published

2025-04-01

Imagine shopping online, finding the perfect outfit, but having no idea how it would actually look on you. Clunky virtual try-on tools might stretch or distort the clothing, leaving you guessing — and underwhelmed.

For developers building fashion tech or e-commerce experiences, creating a realistic, flexible virtual try-on has always been complex… until now.

Enter OOTDiffusion — a smart, diffusion-based system that makes generating lifelike try-on visuals as seamless as uploading a photo. No stretching, no awkward fits. Just crisp, high-quality results that actually look like you’re wearing the outfit.

In this post, we’ll break down how OOTDiffusion works and why it’s a game-changer for fashion apps, online retail, and content creation.

Why OOTDiffusion?

Creating realistic virtual try-ons is notoriously hard. Traditional systems often stretch or distort clothing to fit models, making everything look… off. Developers and product teams trying to build seamless try-on experiences are stuck with complicated pipelines and underwhelming results.

OOTDiffusion changes the game. Powered by latent diffusion models, it creates high-quality, natural-looking images of people wearing virtual outfits—without any manual tweaking or awkward visual glitches.

Whether you’re building an online shopping platform, a fashion content engine, or a personalized stylist app, OOTDiffusion gives you the control and realism you’ve been missing.

Key Features at a Glance

- Hyper-Realistic Output

Clothes look like they belong on the person—not photoshopped on.

- No Stretching, No Warping

OOTDiffusion preserves the original proportions of garments while still fitting them perfectly.

- Built-in Customization Tools

Fine-tune how the clothes appear using integrated controls—no external editing required.

Under the Hood: How It Works

OOTDiffusion uses a sophisticated pipeline to turn static images into lifelike try-on visuals.

Here’s what powers it:

- VAE Encoder & Decoder

Encodes images into a compact latent representation and decodes the result back into a full-resolution image.

- Outfitting UNet

Extracts and preserves important clothing details like texture, color, and style.

- Denoising UNet

Smoothly merges the clothing with the person’s photo, eliminating harsh edges or mismatches.

- Outfitting Fusion

Aligns the outfit with the body shape to produce a natural virtual fit.

- Outfitting Dropout

Randomly drops the garment input during training, which lets you adjust how strongly garment details show up at generation time.
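To make the VAE step concrete, here is a minimal sketch of the size reduction it performs. The 8x downsampling factor, the 4 latent channels, and the 1024x768 input are assumptions borrowed from Stable Diffusion-style VAEs, not values confirmed in this post. `LatentShape` and `latent_shape` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatentShape:
    channels: int
    height: int
    width: int


def latent_shape(image_height, image_width, downsample=8, channels=4):
    """Shape of the latent a VAE encoder would hand to the UNets.

    downsample=8 and channels=4 are assumed Stable Diffusion-style defaults.
    """
    if image_height % downsample or image_width % downsample:
        raise ValueError("image sides must be divisible by the downsample factor")
    return LatentShape(channels, image_height // downsample, image_width // downsample)


shape = latent_shape(1024, 768)
print(shape)  # LatentShape(channels=4, height=128, width=96)
```

Working in this smaller latent space is what keeps the two UNets affordable to run; the decoder only restores full resolution at the very end.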

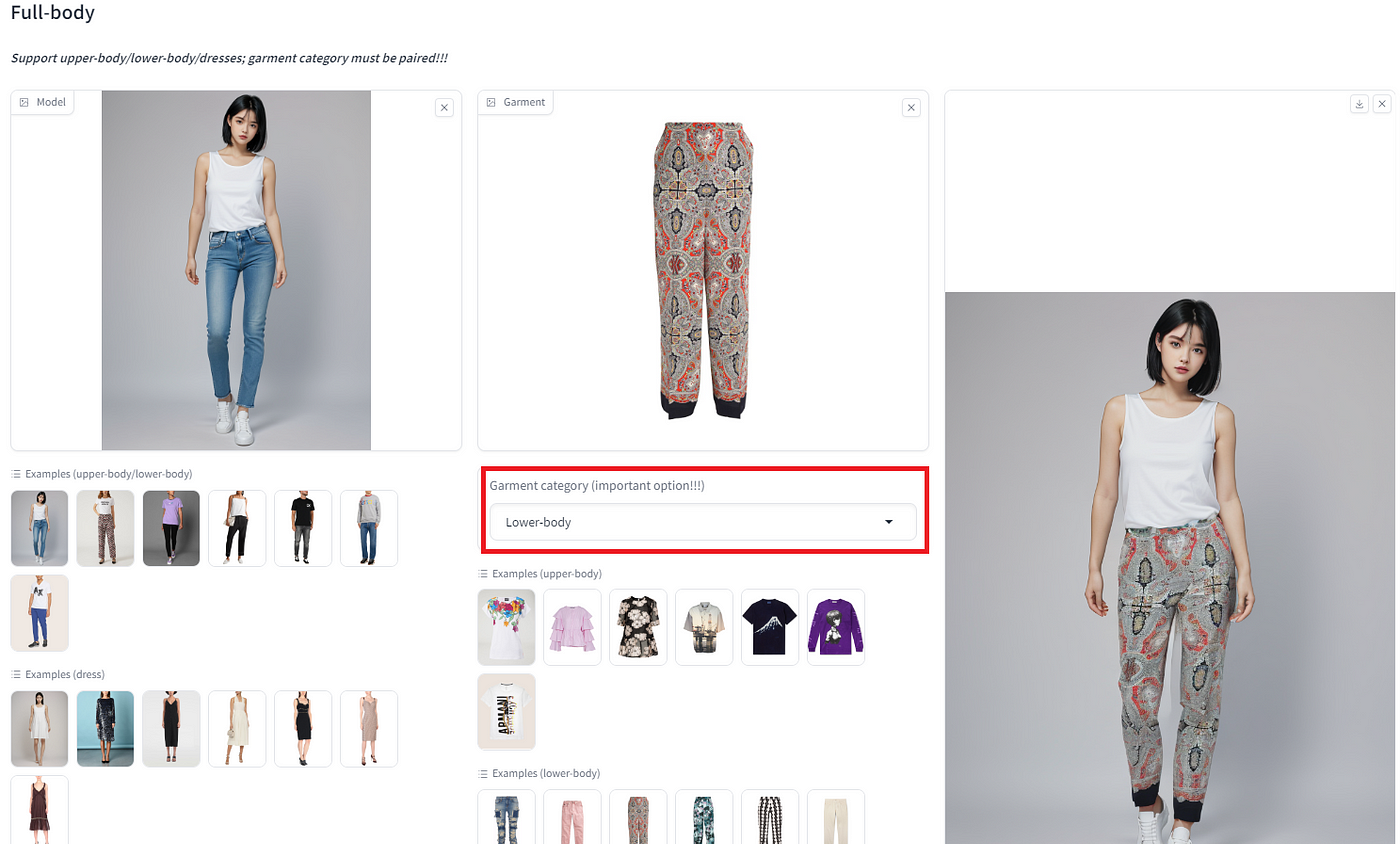

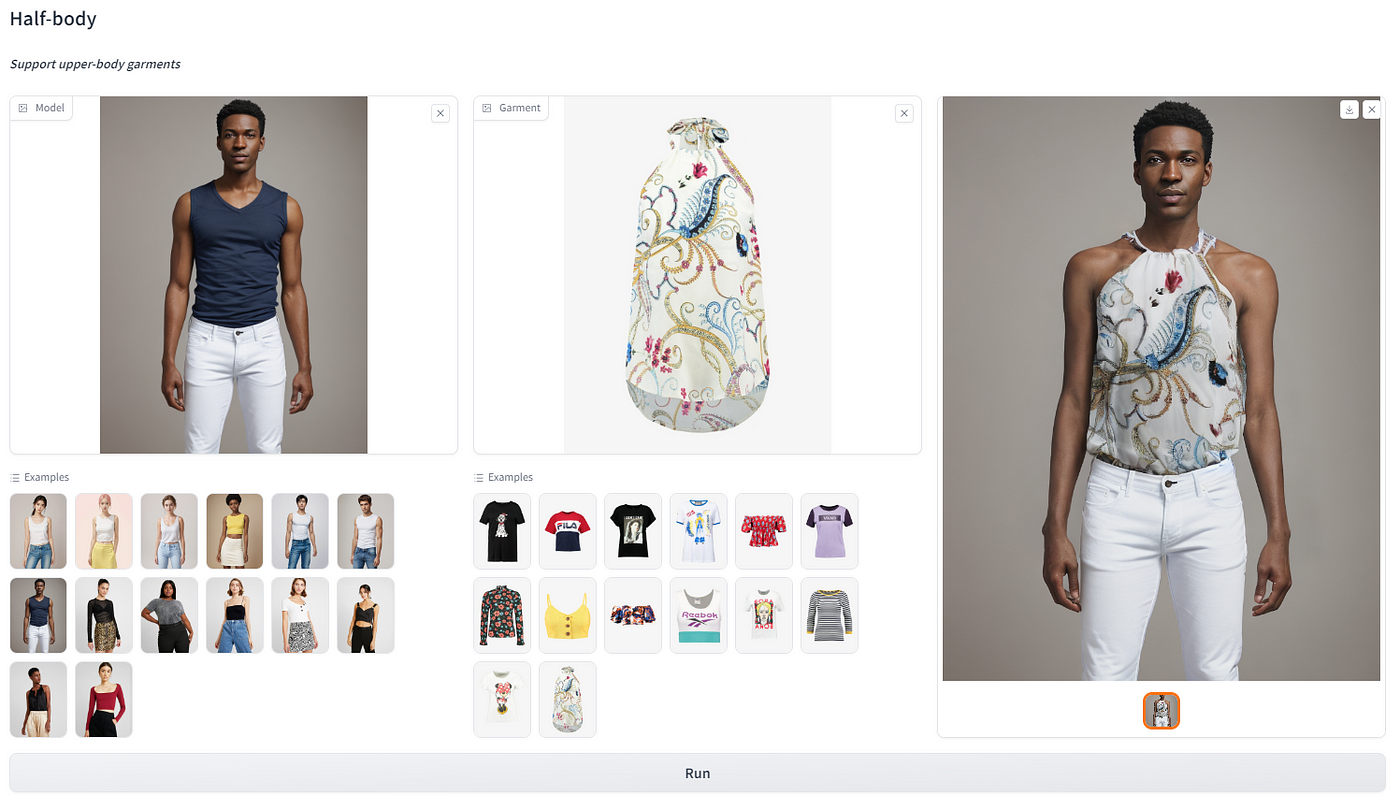

Steps to Creating a Virtual Try-On

Virtual try-on has evolved from a gimmick into a serious tech play that blends computer vision, machine learning, and creativity. But what exactly happens behind the scenes? Here’s a simplified walk-through of the process that transforms raw images into polished, photorealistic try-on experiences.

1. Preparing the Inputs: Person + Outfit

Everything starts with two key images:

- A photo of the person (user or model)

- A photo of the clothing item (shirt, dress, jacket, etc.)

Once both images are uploaded, the system identifies where the outfit will be placed—typically on the upper body or full-body area. It masks out the original clothing on the person’s image, effectively creating a digital “blank canvas” for the new look. This step is crucial to avoid visual overlap or awkward blending.
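The masking step can be sketched in a few lines. This is a toy version on a tiny grayscale grid, assuming the garment mask is already available (in practice it would come from a human-parsing or pose model, which is outside this sketch). `mask_garment_region` and the neutral `fill` value are hypothetical choices for illustration.

```python
def mask_garment_region(person, garment_mask, fill=0.5):
    """Return a copy of `person` with masked pixels replaced by `fill`.

    person: 2D list of grayscale values in [0, 1].
    garment_mask: 2D list of the same shape, 1 where the original clothing sits.
    """
    same_shape = len(person) == len(garment_mask) and all(
        len(p_row) == len(m_row) for p_row, m_row in zip(person, garment_mask)
    )
    if not same_shape:
        raise ValueError("person and garment_mask must have the same shape")
    return [
        [fill if m else px for px, m in zip(p_row, m_row)]
        for p_row, m_row in zip(person, garment_mask)
    ]


person = [
    [0.1, 0.2, 0.3],
    [0.4, 0.9, 0.8],
    [0.7, 0.9, 0.6],
]
garment_mask = [
    [0, 0, 0],
    [0, 1, 1],
    [0, 1, 0],
]
canvas = mask_garment_region(person, garment_mask)
print(canvas)  # [[0.1, 0.2, 0.3], [0.4, 0.5, 0.5], [0.7, 0.5, 0.6]]
```

Pixels outside the mask pass through untouched, which is why the face, hair, and background of the original photo can survive into the final image.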

2. Extract Key Clothing Features

Next, the system analyzes the clothing image to understand all the nuances:

- Fabric texture (is it cotton, silk, leather?)

- Color gradients and patterns (stripes, florals, prints)

- Structure and fit (tight vs. loose, sleeve shape, neckline)

This isn’t just about copying and pasting. The model needs to capture how the clothing behaves in the real world so it can render folds, drapes, and depth accurately.

3. Merging the Clothing with the Person

With both the person and the outfit digitally understood, the system now performs the core magic: fusion.

Pose estimation and body-part parsing tell the system where the garment belongs. Instead of warping the clothing image to fit, the model blends the garment’s features into the person’s image as it is generated, so the sleeves, hems, and seams land where they should for that pose and body shape.

The goal is to make it look like the user is actually wearing the item—not like a sticker slapped on top.
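One way to picture the fusion step is as attention over a combined set of person and garment features. The sketch below assumes the fusion works by concatenating the two feature sets, running self-attention over all of them, and keeping only the person half of the output. It uses identity projections and tiny hand-written vectors, so it is a toy illustration rather than the model's actual layers; `outfitting_fusion` and `self_attention` are hypothetical helper names.

```python
import math


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Single-head self-attention with identity projections (Q = K = V = tokens)."""
    dim = len(tokens[0])
    attended = []
    for query in tokens:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        weights = softmax(scores)
        attended.append(
            [sum(w * value[i] for w, value in zip(weights, tokens)) for i in range(dim)]
        )
    return attended


def outfitting_fusion(person_tokens, garment_tokens):
    """Attend over person + garment tokens, then keep only the person positions."""
    fused = self_attention(person_tokens + garment_tokens)
    return fused[: len(person_tokens)]


person_tokens = [[1.0, 0.0], [0.0, 1.0]]
garment_tokens = [[1.0, 1.0]]
fused = outfitting_fusion(person_tokens, garment_tokens)
print(len(fused), len(fused[0]))  # 2 2
```

The output has the same shape as the person features, but each position has now mixed in information from the garment. No pixel of the clothing image is moved or stretched along the way.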

4. Post-Processing & Refinement

Once the merged image is ready, the system goes through a clean-up phase. This includes:

- Removing artifacts or jagged edges

- Blending lighting and shadows

- Smoothing out transitions between skin, fabric, and background

This step ensures the final output feels photorealistic and studio-grade, ready to be used in apps, e-commerce platforms, or social sharing. The end result? A polished, high-resolution image of the person wearing the selected outfit, tailored to their pose and body shape. It’s fast, scalable, and increasingly indistinguishable from a real photograph.
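As an illustration of the transition-smoothing idea, here is a minimal sketch of feathered compositing: the binary garment mask is blurred so the generated region fades into the original photo instead of ending at a hard edge. This is a generic image-compositing technique and an assumption on our part, not a confirmed part of OOTDiffusion's pipeline. The function names and the box-blur radius are hypothetical.

```python
def box_blur_1d(values, radius=1):
    """Average each value with its neighbours inside `radius`."""
    blurred = []
    for i in range(len(values)):
        window = values[max(0, i - radius): i + radius + 1]
        blurred.append(sum(window) / len(window))
    return blurred


def feather_mask(mask, radius=1):
    """Soften a binary mask by blurring along rows, then along columns."""
    rows = [box_blur_1d(row, radius) for row in mask]
    cols = [box_blur_1d(list(col), radius) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


def composite(generated, original, soft_mask):
    """Per-pixel blend: mask * generated + (1 - mask) * original."""
    return [
        [m * g + (1 - m) * o for g, o, m in zip(g_row, o_row, m_row)]
        for g_row, o_row, m_row in zip(generated, original, soft_mask)
    ]


mask = [[0, 0, 1, 1, 1]]
soft = feather_mask(mask)
blended = composite([[1.0] * 5], [[0.0] * 5], soft)
print(blended)
```

With a hard mask the blend would jump from 0 to 1 between neighbouring pixels; the feathered mask ramps up gradually across the boundary.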

Innovations That Set It Apart

OOTDiffusion isn’t just another virtual fashion tool. It rethinks how digital outfitting works, with two techniques designed to reduce manual effort and deliver high-quality personalization at scale.

Outfitting Fusion: Precision Fit, Zero Fuss

Traditionally, virtual try-ons struggle with generalization: what works for one body type often looks awkward on another. Outfitting Fusion addresses this by aligning garment features with the person’s body inside the denoising UNet, so no manual adjustment or separate warping step is needed. Whether you’re tall, petite, curvy, or lean, the goal is a result that looks tailor-made.

Outfitting Dropout: Dial the Garment Up or Down

A common pain point in generative try-on is control: you get whatever the model gives you. Outfitting Dropout addresses this at training time by randomly dropping the garment input for a fraction of samples, so the model also learns what the image looks like without it. At generation time, this makes classifier-free guidance possible: a single guidance scale sets how strongly the garment’s details are enforced in the output.
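Dropout on a conditioning input is usually paired with classifier-free guidance, and the sketch below shows both halves under that assumption. The 10% drop probability, the use of an all-zeros vector as the "no garment" input, and the function names are hypothetical choices for illustration. The guidance formula itself is the standard one.

```python
import random


def apply_outfitting_dropout(garment_latent, drop_prob=0.1, rng=random):
    """Training time: occasionally replace the garment latent with zeros."""
    if rng.random() < drop_prob:
        return [0.0] * len(garment_latent)
    return list(garment_latent)


def guided_noise(noise_uncond, noise_cond, guidance_scale):
    """Inference time: uncond + scale * (cond - uncond), element by element."""
    return [
        u + guidance_scale * (c - u)
        for u, c in zip(noise_uncond, noise_cond)
    ]


# Two noise predictions for the same step: without and with the garment.
without_garment = [0.0, 1.0]
with_garment = [1.0, 3.0]

print(guided_noise(without_garment, with_garment, 1.0))  # [1.0, 3.0]
print(guided_noise(without_garment, with_garment, 2.0))  # [2.0, 5.0]
```

A scale of 1.0 reproduces the garment-conditioned prediction, while larger values push the result further toward the garment's details. The model can only produce the unconditioned prediction because it saw dropped garments during training.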

Where It Shines

OOTDiffusion isn’t just a lab experiment. It’s built for real-world impact across several fast-moving industries. Here’s where it truly earns its stripes:

E-Commerce Virtual Try-On

Imagine letting shoppers see how clothes look on them, not a default mannequin. OOTDiffusion lets retailers offer body-aware virtual try-ons from a single uploaded photo. This isn’t just about convenience. It can also help reduce return rates, increase buyer confidence, and create a more inclusive shopping experience.

Personalized Fashion Recommendations

Because it can render the same garment on different body shapes and poses, OOTDiffusion can serve as the visual layer of a fashion recommender. A separate recommendation system picks candidate outfits, and OOTDiffusion shows users how each one would look on them, which makes the suggestions easier to judge.

Social Media & Ad Content Generation

Need a steady stream of fresh, styled visuals for marketing? OOTDiffusion can become your content engine. Brands can generate photorealistic outfit images tailored to different demographics, ready for ads, lookbooks, influencer campaigns, and more.

Digital Stylists and Smart Mirrors

In futuristic retail setups and luxury stores, smart mirrors powered by OOTDiffusion could provide interactive styling experiences. Think AI-based assistants that don’t just show clothes, but help shoppers compare looks throughout the shopping journey.

Conclusion

If you’re building the future of digital fashion, OOTDiffusion is the secret weapon your stack’s been missing. It’s fast, smart, and ridiculously easy to integrate—bringing runway-level realism to your virtual try-ons.

Have any questions or need help? Drop a comment below. We’d love to hear your thoughts!
